Active Vision in Immersive Virtual Reality Environments

Decades of screen-based 2D paradigms in cognitive psychology have provided us with an abundance of insights into the human mind. How do these hold up in naturalistic, navigable 3D environments with realistic task constraints? We investigate this question in our virtual reality lab.

Beyond that, VR enables us to use paradigms that would never have been feasible on screen or even in real-world settings: having participants lift a fridge with one hand, switching the locations of objects in scenes behind their backs, or having them throw darts over the edge of a dangerous cliff in a storm. All of this is easy to implement in our virtual environment.

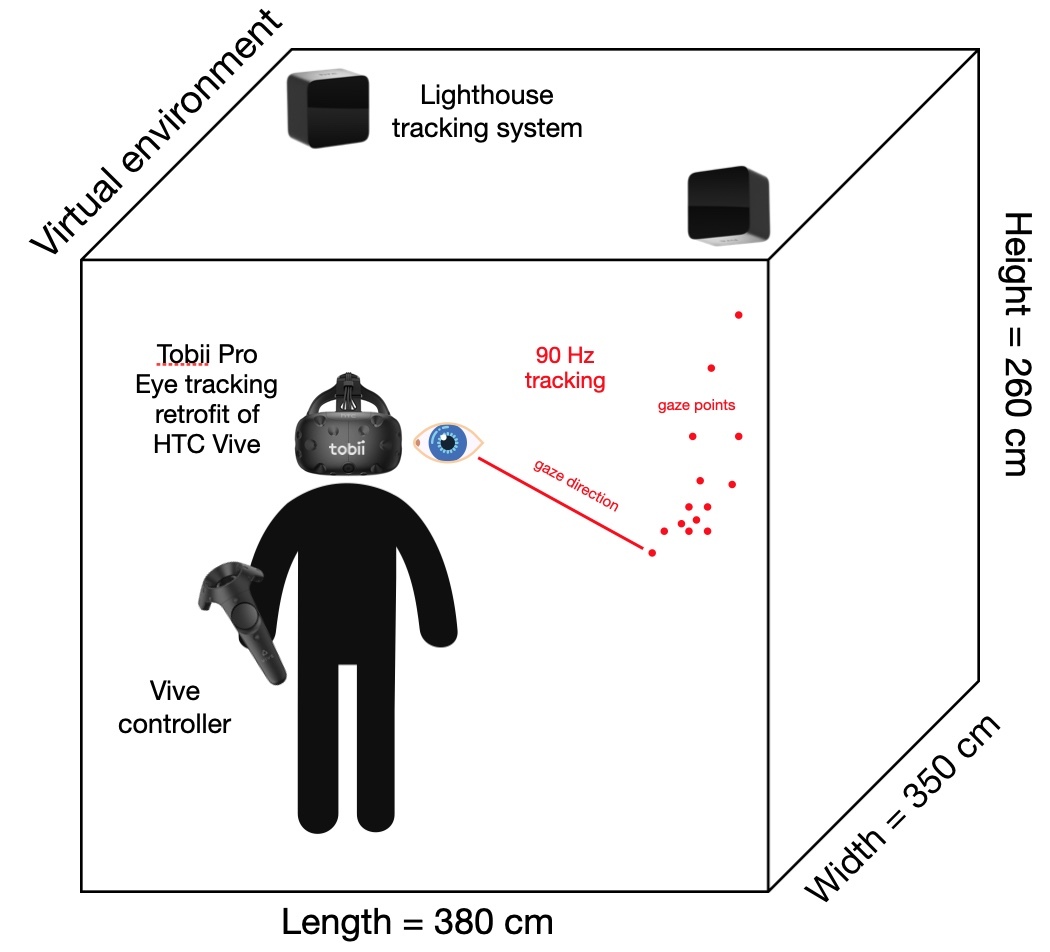

Furthermore, combining VR with eye tracking allows us to observe eye movements in realistic scenarios while maintaining full control over the environment. The VR eye tracker also lets us run 3D gaze-contingent paradigms that were not possible before.

David, E. J., Beitner, J., & Võ, M. L.-H. (2021). The importance of peripheral vision when searching 3D real-world scenes: A gaze-contingent study in virtual reality. Journal of Vision, 21(7), 3. doi: https://doi.org/10.1167/jov.21.7.3

Beitner, J., Helbing, J., Draschkow, D., & Võ, M. L.-H. (2021). Get Your Guidance Going: Investigating the Activation of Spatial Priors for Efficient Search in Virtual Reality. Brain Sciences, 11(1), 44. doi: https://doi.org/10.3390/brainsci11010044

David, E., Beitner, J., & Võ, M. L.-H. (2020). Effects of Transient Loss of Vision on Head and Eye Movements during Visual Search in a Virtual Environment. Brain Sciences, 10(11), 841. doi: https://doi.org/10.3390/brainsci10110841

Helbing, J., Draschkow, D., & Võ, M. L.-H. (2020). Search superiority: Goal-directed attentional allocation creates more reliable incidental identity and location memory than explicit encoding in naturalistic virtual environments. Cognition, 196, 104147. doi: https://doi.org/10.1016/j.cognition.2019.104147

Draschkow, D., & Võ, M. L.-H. (2017). Scene grammar shapes the way we interact with objects, strengthens memories, and speeds search. Scientific Reports, 7(1), 16471. doi: https://doi.org/10.1038/s41598-017-16739-x